I've found that the overwhelming majority of online information on artificial intelligence research falls into one of two categories: explanations of advances aimed at lay audiences, and explanations of advances aimed at other researchers. I haven't found a good resource for people with a technical background who are unfamiliar with the more advanced concepts and want someone to fill them in. This is my attempt to bridge that gap by providing approachable yet (relatively) detailed explanations. In this post, I explain the titular paper, Complementary Learning Systems Theory Updated.

Motivation

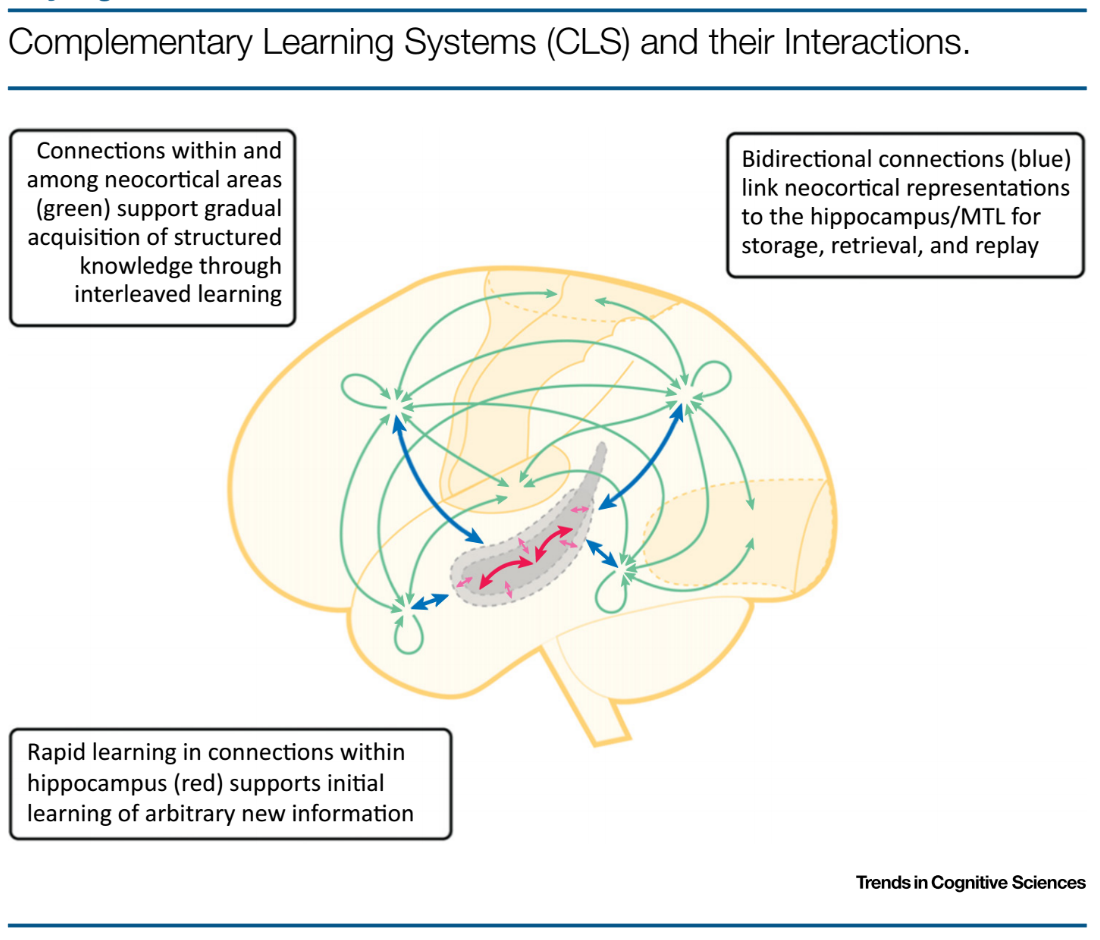

Over twenty years ago, McClelland, McNaughton and O'Reilly proposed a theory to explain a substantial and expanding body of evidence regarding how the mammalian brain learns. Called Complementary Learning Systems (CLS) theory, it held that intelligent agents must possess two learning systems. The first, instantiated in the mammalian hippocampus, quickly learns specific experiences using non-parametric representations of information. The second, instantiated in the mammalian neocortex, more slowly learns generalizable, structured knowledge using a parametric representation. Over time, information in the first system is consolidated and transferred to the second through a process called replay. As the name suggests, experiences stored in the first system are repeatedly passed to the second system so that meaningful structure can be extracted, much like how neural networks repeatedly receive the same data while training. The image below shows
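To make the two-system picture concrete, here is a minimal toy sketch of my own; it is not code or a model from the paper. I'm assuming a one-dimensional regression task, and the names `EpisodicStore`, `SlowLearner`, and `replay` are hypothetical. The fast system memorizes each experience verbatim after a single exposure, while the slow system is a two-parameter linear model that only picks up the underlying rule after many small gradient steps on replayed experiences.

```python
import random


class EpisodicStore:
    """Fast system (toy stand-in): stores every experience verbatim."""

    def __init__(self):
        self.episodes = []

    def store(self, x, y):
        # One exposure is enough: the experience is simply recorded.
        self.episodes.append((x, y))

    def recall(self, x):
        # Non-parametric lookup: return the target of the nearest stored input.
        nearest = min(self.episodes, key=lambda episode: abs(episode[0] - x))
        return nearest[1]

    def sample(self, rng):
        # Pick one stored experience uniformly at random for replay.
        return rng.choice(self.episodes)


class SlowLearner:
    """Slow system (toy stand-in): linear model y = w * x + b."""

    def __init__(self, learning_rate=0.05):
        self.w = 0.0
        self.b = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        return self.w * x + self.b

    def update(self, x, y):
        # One small gradient step on the squared error for a single example.
        error = self.predict(x) - y
        self.w -= self.learning_rate * error * x
        self.b -= self.learning_rate * error


def replay(store, learner, steps, rng):
    """Repeatedly pass stored experiences from the fast system to the slow one."""
    for _ in range(steps):
        x, y = store.sample(rng)
        learner.update(x, y)


rng = random.Random(0)
store = EpisodicStore()
learner = SlowLearner()

# Each experience is seen exactly once; the hidden rule is y = 2x + 1.
for x in [0.0, 0.25, 0.5, 0.75, 1.0]:
    store.store(x, 2.0 * x + 1.0)

replay(store, learner, steps=5000, rng=rng)
```

After storage alone, `store.recall(0.25)` already returns the memorized target, but `learner.predict` still outputs zero everywhere. Only after replay does the slow learner recover the rule, at which point it can also make a sensible prediction for an input such as `x = 2.0` that was never stored, which is something the lookup-based store cannot do.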

But in the intervening years, evidence has emerged that calls CLS theory into question. This review aims to update CLS theory to align it with the empirical evidence, and to suggest

Background

Corrections

Future Directions

Summary

Notes

I appreciate any and all feedback. If I've made an error or if you have a suggestion, you can email me or comment on the Reddit or Hacker News threads.